How to Measure Generative Visibility: KPIs That Matter in the AI Era

- April 21, 2026

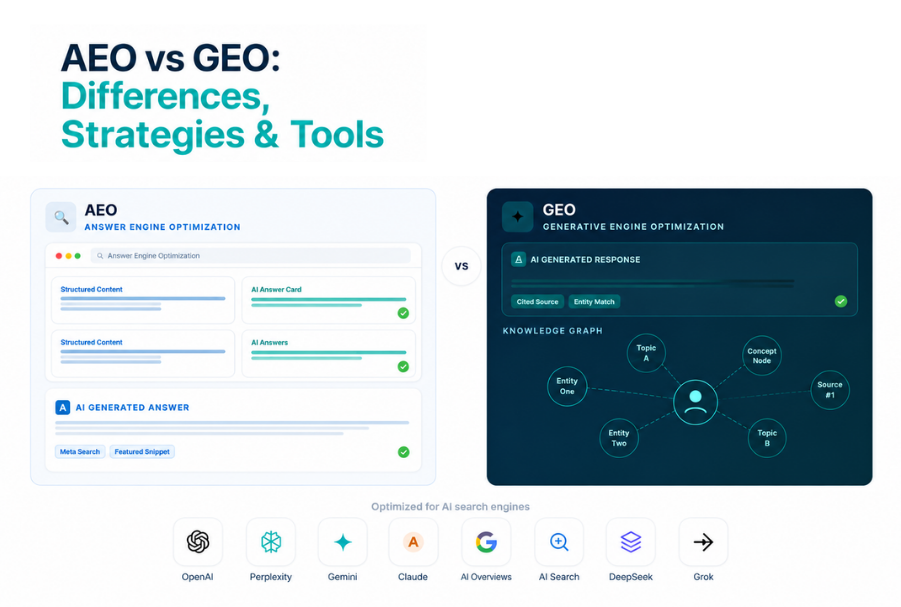

The rules of search visibility are being rewritten. Instead of scrolling through links, users now receive direct, synthesized responses from AI systems. This shift has given rise to Generative Engine Optimization (GEO)—a new discipline focused on ensuring your brand appears in AI-generated responses across tools like ChatGPT, Google’s AI Overviews, and other conversational interfaces.

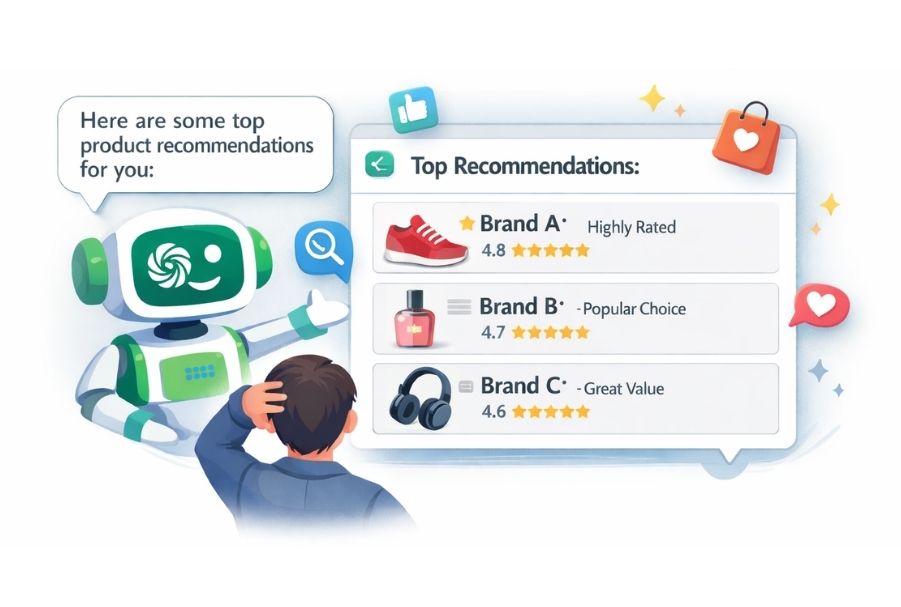

When a potential customer asks ChatGPT, Gemini, or Perplexity to recommend a project management tool, a cybersecurity vendor, or a skincare brand, your Google ranking means little if your brand isn't in the answer. With GEO, the question isn't just "Can people find you?" It's "Does the AI mention you?"

In this post, we'll break down the most important KPIs for AI search performance, explain how to track them, and show you how to measure brand presence in AI answers.

What is Generative Visibility?

Generative visibility refers to how often, how prominently, and in what context your brand appears within AI-generated responses.

Unlike traditional search:

- There are no fixed rankings

- Multiple sources are blended into one answer

- Visibility is contextual, not positional

That’s why tracking GEO performance metrics is essential—because influence in AI answers is the new currency of discoverability.

Why Traditional SEO Metrics are No Longer Enough

For twenty years, the "Holy Trinity" of search metrics was simple: Rankings, Impressions, and Clicks. However, AI engines operate on a "zero-click" philosophy. They synthesize information from multiple sources to give the user an immediate answer. If a user asks, "What is the best CRM for a 50-person startup?" and ChatGPT provides a detailed comparison including your brand, you have achieved "Generative Visibility"—even if the user never clicks through to your website.

Traditional analytics won't show you that win. To capture your true reach, you need to transition from a URL-centric model to an Entity-centric model.

The Core KPIs for Generative Engine Optimization Metrics

1. AI Answer Presence Rate

What it is: The percentage of relevant queries for which your brand appears in an AI-generated response.

How to track it: Create a set of 50–100 target queries — questions your ideal customers would realistically ask an AI assistant. Run them across ChatGPT, Gemini, Perplexity, and Claude. Log when your brand is mentioned versus absent.
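As a minimal sketch of the calculation, assuming each logged run is one query on one platform (the `QueryRun` record and the sample queries are hypothetical, not part of any tool), the presence rate is the share of runs in which the brand was mentioned:

```python
from dataclasses import dataclass


@dataclass
class QueryRun:
    """One target query run on one AI platform (hypothetical log record)."""
    query: str
    platform: str
    brand_mentioned: bool


def presence_rate(runs):
    """Percentage of logged runs in which the brand was mentioned."""
    if not runs:
        return 0.0
    mentioned = sum(1 for run in runs if run.brand_mentioned)
    return 100.0 * mentioned / len(runs)


runs = [
    QueryRun("best CRM for a 50-person startup", "ChatGPT", True),
    QueryRun("best CRM for a 50-person startup", "Gemini", False),
    QueryRun("CRM with built-in email automation", "Perplexity", True),
    QueryRun("CRM with built-in email automation", "Claude", False),
]

print(f"{presence_rate(runs):.1f}%")  # 50.0%
```

Counting per run rather than per query is a design choice; if you prefer to count a query as "present" when any platform mentions the brand, group the runs by query first.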

Why it matters: This is your foundational GEO metric. Everything else builds on knowing whether you exist in AI-generated answers at all.

Benchmark goal: As a rough starting target, aim for presence in 30–40% of relevant queries within your niche in the first 6 months of an active GEO strategy.

2. Citation Frequency & Source Authority

What it is: How often AI tools cite your content as a source, and the quality/context of those citations.

How to track it: Monitor which of your URLs appear as cited sources in AI responses. Tools like Perplexity directly surface citations — track which pages are cited, for what types of queries, and how often.

Why it matters: Citations are the generative equivalent of backlinks. When an AI cites your white paper, your research, or your product page, it's a strong signal of source authority — and it drives referral intent even without a direct click.

Key sub-metrics:

- Number of unique pages cited across AI platforms

- Query categories that trigger your citations

- Citation context (is your brand cited as a primary recommendation or a footnote?)
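The sub-metrics above can be tallied from a simple citation log. The sketch below assumes a hand-maintained list of tuples; the platforms, categories, `example.com` URLs, and the "primary"/"footnote" labels are all illustrative placeholders:

```python
from collections import Counter

# Hypothetical citation log: (platform, query_category, cited_url, role)
citations = [
    ("Perplexity", "comparison", "https://example.com/pricing", "primary"),
    ("Perplexity", "how-to", "https://example.com/blog/setup-guide", "footnote"),
    ("ChatGPT", "comparison", "https://example.com/pricing", "primary"),
    ("Gemini", "how-to", "https://example.com/blog/setup-guide", "footnote"),
    ("Gemini", "comparison", "https://example.com/whitepaper", "footnote"),
]

# Number of unique pages cited across AI platforms
unique_pages = {url for _, _, url, _ in citations}

# Query categories that trigger citations
by_category = Counter(category for _, category, _, _ in citations)

# Citation context: primary recommendation vs. footnote
by_role = Counter(role for _, _, _, role in citations)

print(len(unique_pages))        # 3
print(by_category.most_common())
print(dict(by_role))
```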

3. Brand Sentiment Accuracy in AI Responses

What it is: How accurately and positively AI models describe your brand, products, or services.

How to track it: Query AI tools with brand-specific prompts like "What is [Brand]?" or "What are the pros and cons of [Product]?" Score responses across three dimensions: accuracy, sentiment (positive/neutral/negative), and completeness.
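One way to keep these scores comparable over time is a small scoring record per response. This is only a sketch: the 0-5 scales, the numeric sentiment mapping, and the review threshold are assumptions chosen for illustration, and the scores themselves are assigned by a human reviewer:

```python
from dataclasses import dataclass
from statistics import mean

# Assumed mapping from sentiment label to a numeric value
SENTIMENT_SCORES = {"negative": -1, "neutral": 0, "positive": 1}


@dataclass
class ResponseScore:
    """Reviewer-assigned scores for one AI response (hypothetical schema)."""
    prompt: str
    platform: str
    accuracy: int      # 0-5, assumed scale
    sentiment: str     # "positive", "neutral", or "negative"
    completeness: int  # 0-5, assumed scale


def summarize(scores):
    """Average each of the three dimensions across all scored responses."""
    return {
        "accuracy": mean([s.accuracy for s in scores]),
        "sentiment": mean([SENTIMENT_SCORES[s.sentiment] for s in scores]),
        "completeness": mean([s.completeness for s in scores]),
    }


scores = [
    ResponseScore("What is [Brand]?", "ChatGPT", 4, "positive", 3),
    ResponseScore("What are the pros and cons of [Product]?", "Gemini", 2, "neutral", 5),
]

summary = summarize(scores)
# Responses at or below an assumed accuracy threshold are queued for review
needs_review = [s for s in scores if s.accuracy <= 2]

print(summary)
print([s.platform for s in needs_review])
```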

Why it matters: AI hallucinations and outdated training data can cause models to misrepresent your brand. If GPT-4 is describing a product you discontinued two years ago, that's an active reputation risk — and you won't catch it without deliberately measuring it.

4. Share of Voice in AI Answers (AI-SoV)

What it is: Among all brands mentioned in AI responses to your target queries, what percentage of mentions belong to you?

How to track it: For each target query, log every brand mentioned in the AI response — not just yours. Calculate your mentions as a percentage of total brand mentions across all responses.
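The calculation can be sketched as follows, assuming you have already extracted the list of brands mentioned in each response (the brand names here are made up):

```python
from collections import Counter


def share_of_voice(responses, brand):
    """Brand's mentions as a percentage of all brand mentions.

    `responses` is a list where each item is the list of brands
    mentioned in one AI response.
    """
    counts = Counter(b for mentioned in responses for b in mentioned)
    total = sum(counts.values())
    return 100.0 * counts[brand] / total if total else 0.0


# Hypothetical brands logged from four AI responses
responses = [
    ["AcmeCRM", "RivalOne", "RivalTwo"],
    ["RivalOne"],
    ["AcmeCRM", "RivalOne"],
    ["RivalOne", "RivalTwo"],
]

print(share_of_voice(responses, "AcmeCRM"))   # 25.0
print(share_of_voice(responses, "RivalOne"))  # 50.0
```

In this sample, AcmeCRM appears in half of the responses yet holds only a quarter of all mentions, which is the gap this KPI is meant to expose.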

Why it matters: This is the generative equivalent of Share of Voice in media monitoring. It contextualizes your presence. Being mentioned in 40% of queries sounds great — until you realize your top competitor is mentioned in 80%.

This is arguably the KPI richest in competitive intelligence in the GEO toolkit.

5. Prompt-to-Conversion Attribution

What it is: Tracking how users who arrive via AI-referred traffic convert compared to other channels.

How to track it: AI tools like Perplexity and some Bing AI features do pass referral traffic. In GA4, identify these sessions by their referrer or session source, and add UTM parameters to any links you control. Beyond direct referrals, use post-purchase surveys asking "How did you first hear about us?" so that customers who came through an AI recommendation can say so.
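If you export session data, AI-referred sessions can be separated out by referrer hostname. The sketch below is an assumption-heavy illustration: the hostname list is a guess to be verified against your own referral reports, and the `sessions` tuples are a made-up export format, not a GA4 schema:

```python
from urllib.parse import urlparse

# Assumed referrer hostnames; check these against your own referral data
AI_REFERRER_HOSTS = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer_url):
    """Return the AI platform name for a referrer URL, or None."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


# Hypothetical export: (referrer URL, whether the session converted)
sessions = [
    ("https://www.perplexity.ai/search?q=best+crm", True),
    ("https://chatgpt.com/", False),
    ("https://www.google.com/", True),
    ("", False),
]

ai_sessions = [(ref, conv) for ref, conv in sessions if classify_referrer(ref)]
ai_conversion_rate = sum(conv for _, conv in ai_sessions) / len(ai_sessions)

print(len(ai_sessions), ai_conversion_rate)  # 2 0.5
```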

Why it matters: Justifying GEO investment requires connecting it to revenue. Even rough attribution data — like noticing that branded search spikes after AI-referral sessions — helps build the business case.

6. Content Freshness & Re-indexing Lag

What it is: How quickly new or updated content about your brand gets reflected in AI-generated responses.

How to track it: After publishing a significant piece of content, a press release, or a product update, re-run your benchmark queries monthly. Track how long it takes for the updated information to surface in AI answers.
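A minimal sketch of the lag calculation, assuming each benchmark run is logged with its date and a reviewer's yes/no judgment on whether the answer reflects the update (the function name and dates are hypothetical):

```python
from datetime import date


def reindexing_lag_days(published, runs):
    """Days from publication to the first run that reflects the update.

    `runs` is a list of (run_date, reflects_update) tuples.
    Returns None if no run has reflected the update yet.
    """
    hits = [
        run_date
        for run_date, reflects in runs
        if reflects and run_date >= published
    ]
    return (min(hits) - published).days if hits else None


published = date(2026, 1, 15)
runs = [
    (date(2026, 2, 1), False),
    (date(2026, 3, 1), False),
    (date(2026, 4, 1), True),
    (date(2026, 5, 1), True),
]

print(reindexing_lag_days(published, runs))  # 76
```

Because the queries are only re-run periodically, the result is an upper bound: the update may have surfaced at any point after the previous run.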

Why it matters: AI models are trained on periodic data snapshots, while tools that retrieve live web results can reflect updates sooner. Understanding the lag between publishing content and seeing it reflected in AI answers helps you plan your GEO content calendar more strategically.

7. Query Coverage Depth

What it is: How far down the customer journey your brand appears in AI answers — not just awareness queries, but consideration and decision-stage questions.

How to track it: Segment your target queries by funnel stage:

- Awareness: "What is [category]?"

- Consideration: "Best [category] tools for [use case]"

- Decision: "[Brand A] vs [Brand B]" or "Is [Brand] worth it?"

Measure your AI Answer Presence Rate separately for each stage.
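The per-stage breakdown can be sketched like this, assuming each logged run carries a funnel-stage label alongside the mentioned/absent flag (the sample data is invented):

```python
from collections import defaultdict


def presence_by_stage(runs):
    """Presence rate (percent) per funnel stage.

    `runs` is a list of (funnel_stage, brand_mentioned) tuples.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for stage, mentioned in runs:
        totals[stage] += 1
        hits[stage] += int(mentioned)
    return {stage: 100.0 * hits[stage] / totals[stage] for stage in totals}


# Hypothetical log of ten runs across three funnel stages
runs = [
    ("awareness", True), ("awareness", True),
    ("awareness", True), ("awareness", False),
    ("consideration", True), ("consideration", False),
    ("consideration", True), ("consideration", False),
    ("decision", False), ("decision", False),
]

print(presence_by_stage(runs))
# {'awareness': 75.0, 'consideration': 50.0, 'decision': 0.0}
```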

Why it matters: Many brands are present in broad awareness queries but invisible at the decision stage — exactly where a sale is won or lost. Query coverage depth reveals where your GEO strategy has gaps.

Building Your GEO Measurement Stack

You don't need a proprietary tool to start. Here's a practical stack:

Manual tracking (to start):

- A spreadsheet with 50–100 target queries

- Weekly query runs across 3–4 AI platforms

- A simple tagging system: Mentioned / Not Mentioned / Cited
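If you later want to move the spreadsheet step into a script, the tagging system could look roughly like the sketch below. It assumes "Cited" means the brand's domain appears in the response text and "Mentioned" means the brand name appears; the brand, domain, and sample responses are all placeholders:

```python
import csv
import io
import re


def tag_response(response_text, brand, brand_domain):
    """Return 'Cited', 'Mentioned', or 'Not Mentioned' for one AI response."""
    if brand_domain.lower() in response_text.lower():
        return "Cited"
    if re.search(rf"\b{re.escape(brand)}\b", response_text, flags=re.IGNORECASE):
        return "Mentioned"
    return "Not Mentioned"


# Hypothetical responses collected during one weekly run
collected = [
    ("ChatGPT", "best CRM for startups", "Top options include AcmeCRM and RivalOne."),
    ("Perplexity", "best CRM for startups", "See acmecrm.example/pricing for plan details."),
    ("Gemini", "best CRM for startups", "RivalOne is a common pick."),
]

buffer = io.StringIO()  # swap for open("geo_log.csv", "a", newline="") to persist
writer = csv.writer(buffer)
writer.writerow(["date", "platform", "query", "tag"])

tags = []
for platform, query, text in collected:
    tag = tag_response(text, "AcmeCRM", "acmecrm.example")
    tags.append(tag)
    writer.writerow(["2026-04-21", platform, query, tag])

print(tags)  # ['Mentioned', 'Cited', 'Not Mentioned']
```

Simple string matching will miss misspellings and product nicknames, so a manual spot check of the tags is still worthwhile.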

Scale with tools: Platforms like Profound, Otterly.AI, Peec.ai, and AthenaHQ are specifically built to monitor brand presence in AI-generated answers. They automate query running, track mentions over time, and provide competitive benchmarking.

Integrate with existing analytics: Use GA4 to identify AI referral traffic, combine with brand search volume trends (a spike in branded searches often correlates with AI mentions), and layer in social listening to catch user conversations about AI recommendations.

A Note on Reporting Cadence

GEO metrics move more slowly than traditional SEO metrics. AI training cycles, content indexing lags, and model updates mean you shouldn't expect week-over-week swings. A monthly review of your core GEO KPIs, with a quarterly competitive benchmarking session, is the right cadence for most brands.

Conclusion

Measuring brand presence in AI answers isn't optional anymore — it's a baseline competency for any marketing team that takes search seriously. The brands building GEO measurement frameworks today will have a compounding advantage as AI-generated answers become the default surface for discovery, research, and purchase decisions.

Start small: pick your 50 most important queries, run them across two or three AI platforms this week, and document what you find. That first snapshot is your baseline — and your first GEO metric.

The AI era of search doesn't reward those who wait for perfect measurement tools. It rewards those who start measuring imperfectly, learn fast, and optimize early.
